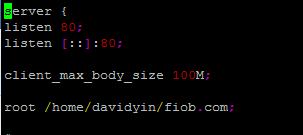

413 Request Entity Too Large – Nginx Web Server

This is an error returned by the Nginx web server. It happened when I tried to import a large SQL backup into a MySQL server through phpMyAdmin. First, I changed the upload limit (maximum file size) in php.ini: upload_max_filesize = 100M post_max_size...
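
Before changing limits, it can help to check how a value like 100M compares with the size of the backup file. The following is a minimal sketch, not part of the original setup: the helper names `php_size_to_bytes` and `fits_limit` are hypothetical, and it assumes PHP's shorthand notation where K, M, and G are powers of 1024.

```python
import re

# Multipliers for PHP shorthand byte values (K, M, G are powers of 1024).
_UNITS = {"": 1, "K": 1024, "M": 1024 ** 2, "G": 1024 ** 3}


def php_size_to_bytes(value: str) -> int:
    """Convert a PHP shorthand size such as '100M' into a number of bytes."""
    match = re.fullmatch(r"\s*(\d+)\s*([KMG]?)\s*", value, flags=re.IGNORECASE)
    if match is None:
        raise ValueError(f"unrecognised size value: {value!r}")
    number, unit = match.groups()
    return int(number) * _UNITS[unit.upper()]


def fits_limit(file_size_bytes: int, limit: str) -> bool:
    """Return True if a file of the given size does not exceed the limit."""
    return file_size_bytes <= php_size_to_bytes(limit)


if __name__ == "__main__":
    print(php_size_to_bytes("100M"))             # 104857600
    print(fits_limit(150 * 1024 ** 2, "100M"))   # False: a 150 MiB dump is too large
```

Note that the request body is slightly larger than the file itself because of form-encoding overhead, so a file that is right at the limit may still be rejected.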